- #1

DoobleD

- 259

- 20

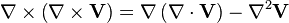

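This is closely related to the thread I posted yesterday, but the question is different, so I created a new thread. There is a vector identity often used when deriving the EM wave equation:

$$\nabla \times (\nabla \times \mathbf{V}) = \nabla(\nabla \cdot \mathbf{V}) - \nabla^2 \mathbf{V}$$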

The grad(div(V)) term is then simply dropped, on the assumption that it equals 0, and I wonder why.

Is it because there are no sources here (no charges), so the divergence is 0? Can this be proven more formally?
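
To make the step concrete, here is a sketch of how the identity is typically applied to the electric field. It assumes the vacuum Maxwell equations with no charges or currents ($\rho = 0$, $\mathbf{J} = 0$); the symbols $\mathbf{E}$, $\mathbf{B}$, $\mu_0$ and $\varepsilon_0$ are the usual ones and are not defined in the post itself.

$$
\begin{aligned}
\nabla \times (\nabla \times \mathbf{E}) &= \nabla(\nabla \cdot \mathbf{E}) - \nabla^2 \mathbf{E} \\
\nabla \cdot \mathbf{E} = \frac{\rho}{\varepsilon_0} = 0 \quad &\Rightarrow \quad \nabla(\nabla \cdot \mathbf{E}) = 0 \\
\nabla \times (\nabla \times \mathbf{E}) = -\frac{\partial}{\partial t}(\nabla \times \mathbf{B}) &= -\mu_0 \varepsilon_0 \frac{\partial^2 \mathbf{E}}{\partial t^2} \\
\Rightarrow \quad \nabla^2 \mathbf{E} &= \mu_0 \varepsilon_0 \frac{\partial^2 \mathbf{E}}{\partial t^2}
\end{aligned}
$$

Under these assumptions the grad(div) term would vanish because of Gauss's law in a charge-free region, not because of the vector identity itself; for a general field V it need not be zero.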
